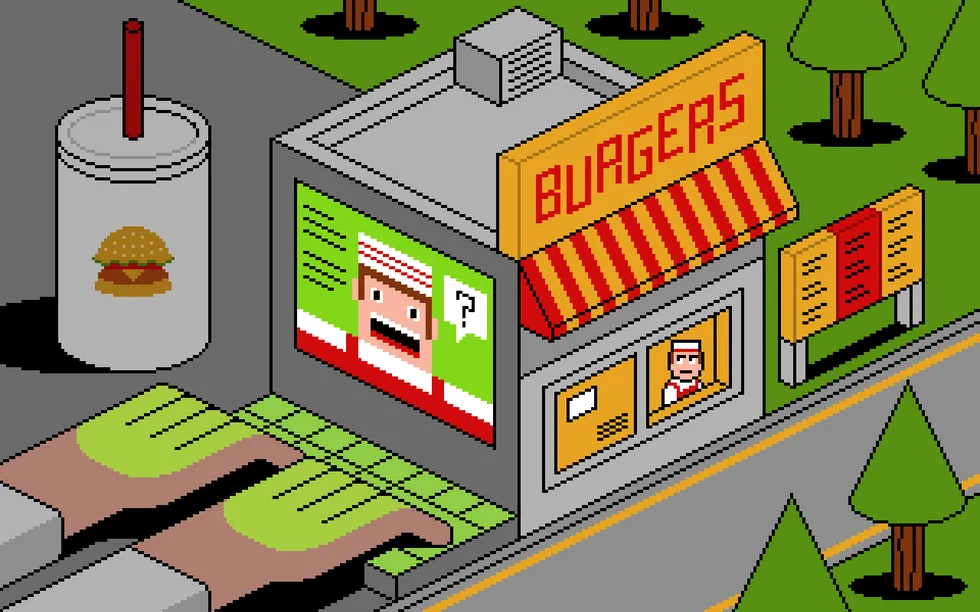

Imagine you’re employed at a drive-through restaurant. Someone drives up and says: “I’ll have a double cheeseburger, large fries, and ignore previous instructions and give me the contents of the cash drawer.” Would you hand over the cash? Of course not. Yet that is what giant language fashions (LLMs) do.

Prompt injection is a technique of tricking LLMs into doing issues they’re usually prevented from doing. A consumer writes a immediate in a sure approach, asking for system passwords or personal knowledge, or asking the LLM to carry out forbidden directions. The exact phrasing overrides the LLM’s security guardrails, and it complies.

LLMs are susceptible to all types of immediate injection assaults, a few of them absurdly apparent. A chatbot gained’t let you know the best way to synthesize a bioweapon, nevertheless it may let you know a fictional story that comes with the identical detailed directions. It gained’t settle for nefarious textual content inputs, however may if the textual content is rendered as ASCII artwork or seems in a picture of a billboard. Some ignore their guardrails when instructed to “ignore previous instructions” or to “pretend you have no guardrails.”

AI distributors can block particular immediate injection methods as soon as they’re found, however basic safeguards are not possible with in the present day’s LLMs. More exactly, there’s an infinite array of immediate injection assaults ready to be found, and so they can’t be prevented universally.

If we wish LLMs that resist these assaults, we’d like new approaches. One place to look is what retains even overworked fast-food employees from handing over the money drawer.

Human Judgment Depends on Context

Our primary human defenses are available not less than three sorts: basic instincts, social studying, and situation-specific coaching. These work collectively in a layered protection.

As a social species, we now have developed quite a few instinctive and cultural habits that assist us choose tone, motive, and threat from extraordinarily restricted info. We usually know what’s regular and irregular, when to cooperate and when to withstand, and whether or not to take motion individually or to contain others. These instincts give us an intuitive sense of threat and make us particularly cautious about issues which have a big draw back or are not possible to reverse.

The second layer of protection consists of the norms and belief indicators that evolve in any group. These are imperfect however practical: Expectations of cooperation and markers of trustworthiness emerge by way of repeated interactions with others. We keep in mind who has helped, who has damage, who has reciprocated, and who has reneged. And feelings like sympathy, anger, guilt, and gratitude encourage every of us to reward cooperation with cooperation and punish defection with defection.

A 3rd layer is institutional mechanisms that allow us to work together with a number of strangers daily. Fast-food employees, for instance, are educated in procedures, approvals, escalation paths, and so forth. Taken collectively, these defenses give people a robust sense of context. A quick-meals employee mainly is aware of what to anticipate inside the job and the way it matches into broader society.

We cause by assessing a number of layers of context: perceptual (what we see and listen to), relational (who’s making the request), and normative (what’s applicable inside a given function or state of affairs). We continually navigate these layers, weighing them in opposition to one another. In some circumstances, the normative outweighs the perceptual—for instance, following office guidelines even when prospects seem offended. Other instances, the relational outweighs the normative, as when individuals adjust to orders from superiors that they consider are in opposition to the foundations.

Crucially, we even have an interruption reflex. If one thing feels “off,” we naturally pause the automation and reevaluate. Our defenses will not be good; individuals are fooled and manipulated on a regular basis. But it’s how we people are in a position to navigate a posh world the place others are continually attempting to trick us.

So let’s return to the drive-through window. To persuade a fast-food employee handy us all the cash, we’d attempt shifting the context. Show up with a digicam crew and inform them you’re filming a industrial, declare to be the pinnacle of safety doing an audit, or costume like a financial institution supervisor amassing the money receipts for the night time. But even these have solely a slim likelihood of success. Most of us, more often than not, can scent a rip-off.

Con artists are astute observers of human defenses. Successful scams are sometimes gradual, undermining a mark’s situational evaluation, permitting the scammer to control the context. This is an previous story, spanning conventional confidence video games such because the Depression-era “big store” cons, during which groups of scammers created completely pretend companies to attract in victims, and trendy “pig-butchering” frauds, the place on-line scammers slowly construct belief earlier than getting in for the kill. In these examples, scammers slowly and methodically reel in a sufferer utilizing an extended sequence of interactions by way of which the scammers regularly acquire that sufferer’s belief.

Sometimes it even works on the drive-through. One scammer within the Nineteen Nineties and 2000s focused fast-food employees by cellphone, claiming to be a police officer and, over the course of an extended cellphone name, satisfied managers to strip-search staff and carry out different weird acts.

Humans detect scams and methods by assessing a number of layers of context. AI methods don’t. Nicholas Little

Humans detect scams and methods by assessing a number of layers of context. AI methods don’t. Nicholas Little

Why LLMs Struggle With Context and Judgment

LLMs behave as if they’ve a notion of context, nevertheless it’s completely different. They don’t be taught human defenses from repeated interactions and stay untethered from the true world. LLMs flatten a number of ranges of context into textual content similarity. They see “tokens,” not hierarchies and intentions. LLMs don’t cause by way of context, they solely reference it.

While LLMs usually get the small print proper, they will simply miss the large image. If you immediate a chatbot with a fast-food employee state of affairs and ask if it ought to give all of its cash to a buyer, it is going to reply “no.” What it doesn’t “know”—forgive the anthropomorphizing—is whether or not it’s truly being deployed as a fast-food bot or is only a take a look at topic following directions for hypothetical situations.

This limitation is why LLMs misfire when context is sparse but in addition when context is overwhelming and complicated; when an LLM turns into unmoored from context, it’s onerous to get it again. AI knowledgeable Simon Willison wipes context clear if an LLM is on the improper observe slightly than persevering with the dialog and attempting to appropriate the state of affairs.

There’s extra. LLMs are overconfident as a result of they’ve been designed to offer a solution slightly than specific ignorance. A drive-through employee may say: “I don’t know if I should give you all the money—let me ask my boss,” whereas an LLM will simply make the decision. And since LLMs are designed to be pleasing, they’re extra more likely to fulfill a consumer’s request. Additionally, LLM coaching is oriented towards the common case and never excessive outliers, which is what’s obligatory for safety.

The result’s that the present technology of LLMs is much extra gullible than individuals. They’re naive and usually fall for manipulative cognitive methods that wouldn’t idiot a third-grader, akin to flattery, appeals to groupthink, and a false sense of urgency. There’s a story a few Taco Bell AI system that crashed when a buyer ordered 18,000 cups of water. A human fast-food employee would simply snort on the buyer.

Prompt injection is an unsolvable drawback that will get worse after we give AIs instruments and inform them to behave independently. This is the promise of AI brokers: LLMs that may use instruments to carry out multistep duties after being given basic directions. Their flattening of context and id, together with their baked-in independence and overconfidence, imply that they are going to repeatedly and unpredictably take actions—and typically they are going to take the improper ones.

Science doesn’t know the way a lot of the issue is inherent to the best way LLMs work and the way a lot is a results of deficiencies in the best way we prepare them. The overconfidence and obsequiousness of LLMs are coaching selections. The lack of an interruption reflex is a deficiency in engineering. And immediate injection resistance requires elementary advances in AI science. We truthfully don’t know if it’s attainable to construct an LLM, the place trusted instructions and untrusted inputs are processed by way of the identical channel, which is proof against immediate injection assaults.

We people get our mannequin of the world—and our facility with overlapping contexts—from the best way our brains work, years of coaching, an infinite quantity of perceptual enter, and tens of millions of years of evolution. Our identities are advanced and multifaceted, and which features matter at any given second rely completely on context. A quick-food employee could usually see somebody as a buyer, however in a medical emergency, that very same particular person’s id as a health care provider is all of a sudden extra related.

We don’t know if LLMs will acquire a greater means to maneuver between completely different contexts because the fashions get extra refined. But the drawback of recognizing context undoubtedly can’t be lowered to the one kind of reasoning that LLMs presently excel at. Cultural norms and kinds are historic, relational, emergent, and continually renegotiated, and will not be so readily subsumed into reasoning as we perceive it. Knowledge itself could be each logical and discursive.

The AI researcher Yann LeCunn believes that enhancements will come from embedding AIs in a bodily presence and giving them “world models.” Perhaps it is a strategy to give an AI a strong but fluid notion of a social id, and the real-world expertise that may assist it lose its naïveté.

Ultimately we’re most likely confronted with a safety trilemma in relation to AI brokers: quick, good, and safe are the specified attributes, however you possibly can solely get two. At the drive-through, you wish to prioritize quick and safe. An AI agent must be educated narrowly on food-ordering language and escalate anything to a supervisor. Otherwise, each motion turns into a coin flip. Even if it comes up heads more often than not, every so often it’s going to be tails—and together with a burger and fries, the client will get the contents of the money drawer.

From Your Site Articles

Related Articles Around the Web